Today, artificial intelligence (AI) is considered a key technology in automation. It imitates human learning and decision-making processes and opens up new avenues for process optimization, quality improvement, and energy efficiency. The most successful approach is machine learning (ML), which detects patterns and correlations in sample data.

Beckhoff consistently integrates this technology into the PC-based control world: With TwinCAT 3 Machine Learning, AI is becoming an integral part of machine control.

This results in an open, integrated hardware and software ecosystem that enables AI models to run directly on the PLC – without external systems or specialized knowledge.

Learn how Beckhoff bridges the gap between data collection, training, and real-time inference – and how you can integrate AI directly into your control system with TwinCAT 3 Machine Learning.

AI applications in industrial automation – recognizing new potential

Artificial intelligence opens up new avenues in machine and system engineering for increasing quality, productivity, and efficiency. It complements classic control and automation concepts wherever conventional algorithms reach their limits, such as in cases of high variance, complex data patterns, or processes that are difficult to model.

AI systems learn from examples, recognize correlations independently, and make data-based decisions. This makes machines more adaptive, precise, and predictive.

Computer vision: Machines see and understand

Automated visual quality control is one of the most important and challenging areas of AI application in industry. While classic image processing algorithms solve well-defined tasks, such as length measurements or edge checks, AI-based methods reveal their strengths when dealing with natural variance and irregular patterns, where conventional rules fail.

Signals and time series: Machines hear and feel

In addition to visual data, time-based signals form the basis of many industrial AI applications. Current, pressure, vibration, or temperature curves provide information about the state of processes, components, and tools. AI models detect patterns and deviations at an early stage, enabling predictive maintenance, process optimization, and anomaly detection in real time.
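As a rough illustration of what detecting deviations in a signal can mean, the sketch below flags samples that lie far outside the recent history of a trace. It uses a plain rolling z-score, which is a simple statistical stand-in and not the method used by any TwinCAT product. The function name, window length, threshold, and the vibration trace are all assumptions made up for this example.

```python
from statistics import mean, stdev


def rolling_zscore_anomalies(signal, window=20, threshold=3.0):
    """Return indices of samples that deviate from the mean of the
    preceding `window` samples by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(signal)):
        history = signal[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: skip to avoid dividing by zero.
            continue
        if abs(signal[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical vibration amplitudes with one injected spike at index 30.
trace = [1.0 + 0.01 * ((i * 7) % 5) for i in range(50)]
trace[30] = 2.0

print(rolling_zscore_anomalies(trace))
```

A trained model would replace the fixed threshold with learned behavior, but the input (a window of past samples) and the output (a per-sample decision) have the same shape.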

From data to application

Beckhoff offers a continuous workflow from data acquisition to real-time execution – fully integrated, open, and without lock-in effects. With its open system, Beckhoff allows specific requirements to be met using toolboxes and functions from the TwinCAT modular system. This also applies to existing system infrastructures that are not based on Beckhoff products.

See below to find out more about the options that Beckhoff offers for each step of this workflow.

Application examples for artificial intelligence in control systems

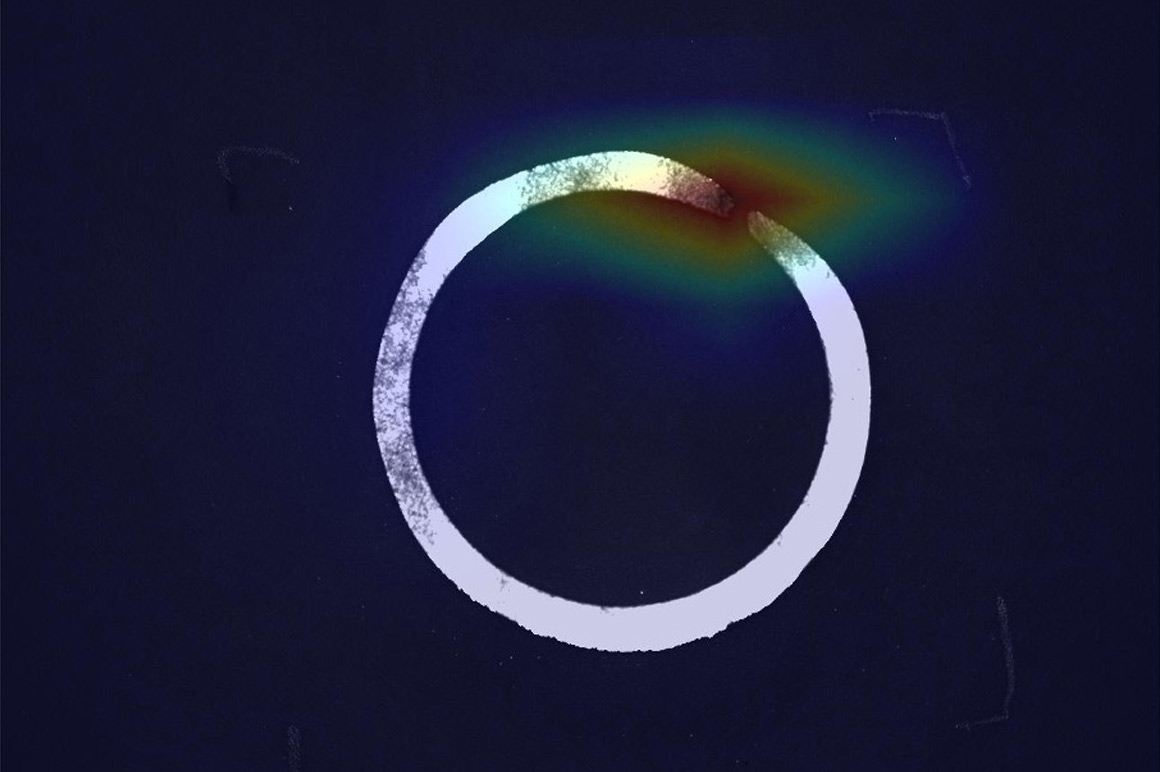

In the discrete production of metallic workpieces, the geometric shape is often a key quality feature. While metric measurement methods assess a workpiece quantitatively, a qualitative statement (such as the classic categorization into OK and non-OK) is often sufficient.

A representative data set of approx. 200 images was recorded and saved using the TwinCAT Vision library. The images were annotated as OK or non-OK, with the various error patterns grouped together as non-OK. Using TE3850 TwinCAT 3 Machine Learning Creator, an image classification model was trained on this data set that correctly predicts whether a workpiece is OK or non-OK in more than 95% of the cases considered, without requiring any AI expert knowledge.

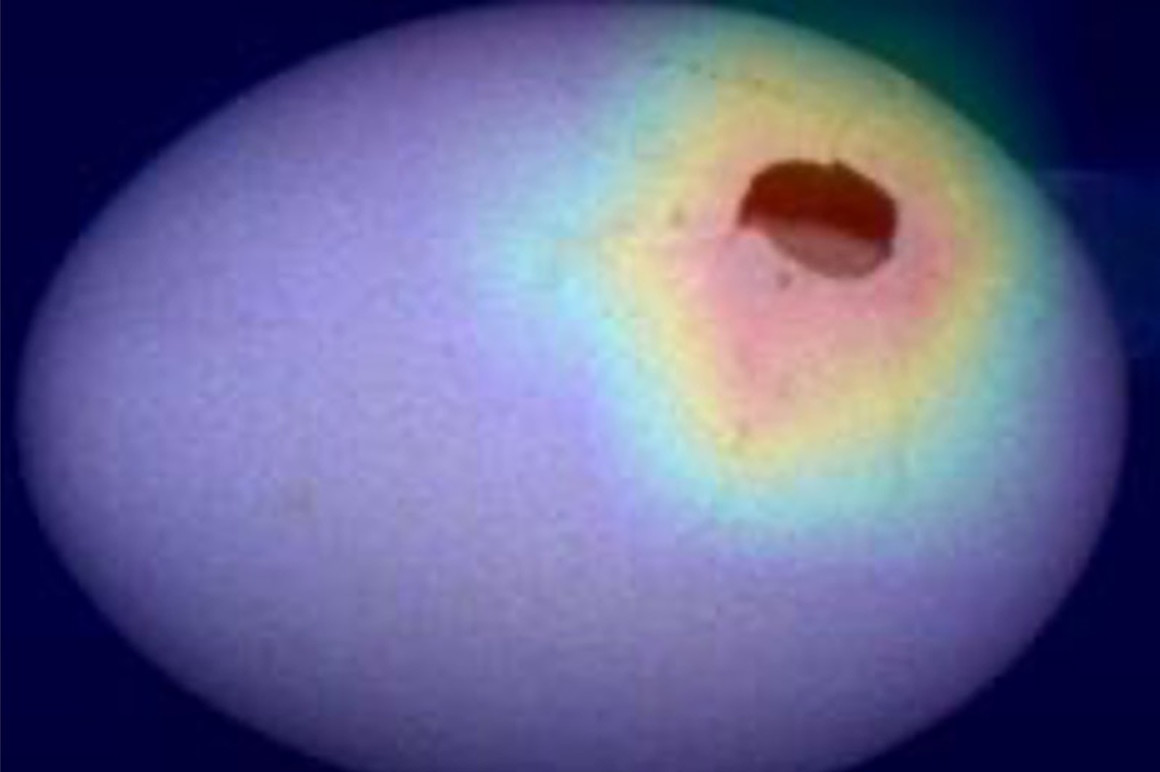

Automation in the food industry contributes to the efficient and resource-saving supply of a wide variety of foods. One challenge is the automated sorting of foodstuffs, as these have a high natural variance compared to manufactured products. Eggs, for example, are to be sorted automatically into the categories OK, dirty, and broken. For this purpose, 200 images covering these three classes were taken and annotated. An AI model that correctly classifies an egg in more than 90% of the cases considered was built with the TE3850 TwinCAT 3 Machine Learning Creator. The explainability methods for AI models included in the product made it easy to see that misclassifications occurred mainly in the boundary region between OK and dirty. This made it immediately clear what measures were needed to improve the model: either provide more sample data in the boundary region between OK and dirty, or define the boundary more cleanly by revising the existing annotations.
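One simple way to see which classes a classifier confuses is a confusion matrix. The sketch below is not the explainability method shipped with the product; it is a plain diagnostic swapped in for illustration. The labels are invented for this example and are not taken from the egg data set.

```python
from collections import Counter

CLASSES = ["OK", "dirty", "broken"]


def confusion_matrix(y_true, y_pred, classes=CLASSES):
    """Rows are the annotated class, columns the predicted class."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in classes] for t in classes]


def accuracy(y_true, y_pred):
    """Fraction of samples whose prediction matches the annotation."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical annotations and predictions, invented for illustration only.
y_true = ["OK"] * 4 + ["dirty"] * 4 + ["broken"] * 2
y_pred = ["OK", "OK", "OK", "dirty",
          "dirty", "dirty", "OK", "dirty",
          "broken", "broken"]

for label, row in zip(CLASSES, confusion_matrix(y_true, y_pred)):
    print(f"{label:>6}: {row}")
print("accuracy:", accuracy(y_true, y_pred))
```

In this made-up data, every error sits in the OK/dirty cells while the broken row is clean, which is the kind of pattern that points to collecting more boundary samples or revising annotations.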

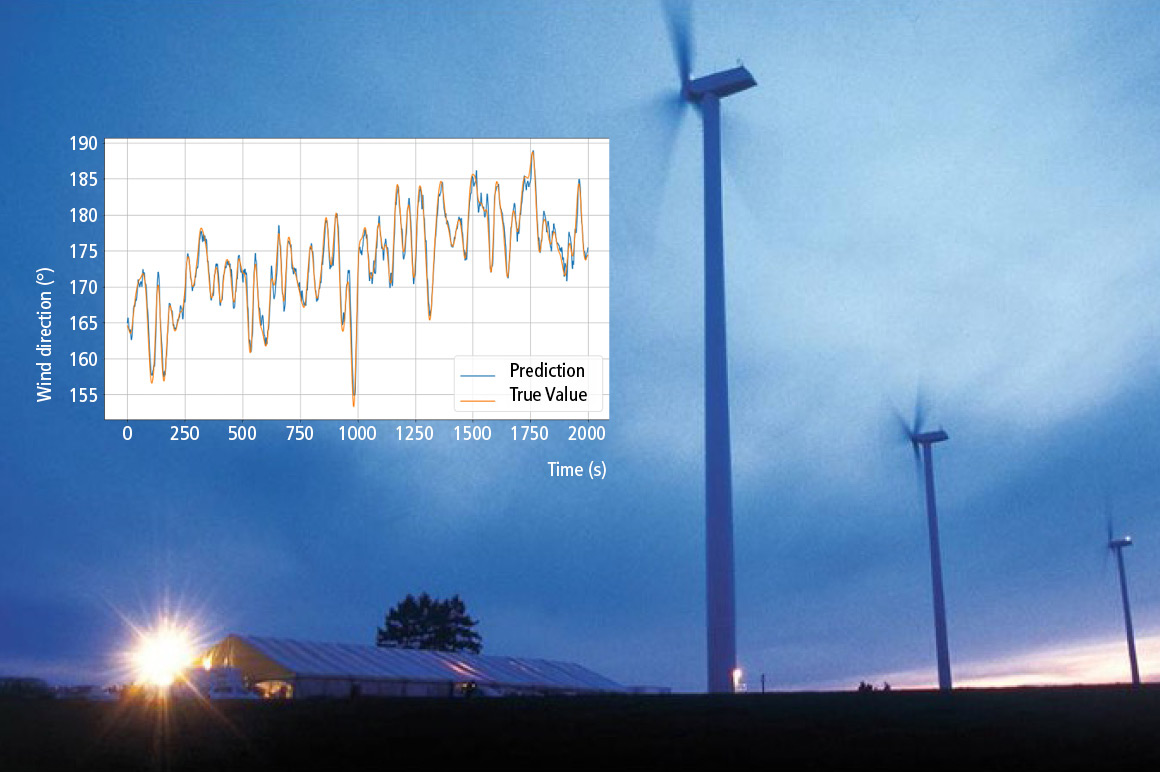

Wind turbines are a key component in the transition to renewable energies. They supply clean electrical energy, which they obtain from the kinetic energy of the wind. Knowing both the wind direction and the wind speed is crucial for the efficiency of the system. The nacelle, which carries the rotor, is aligned with the wind direction. The pitch of the rotor blades is adjusted according to the wind speed so that the turbine operates as constantly as possible at its rated output.

Wind direction tracking and pitch adjustment are relatively slow, which means that the future wind direction and speed have to be estimated in order to move the turbine predictively to the optimum orientation.

Based on wind data collected from real wind turbines, an AI model was created that is able to estimate wind direction and wind speed values 10 to 20 seconds into the future with an acceptable margin of error. The prediction is based entirely on past wind values. The resulting model can be easily integrated into TwinCAT with the TF3810 TwinCAT 3 Neural Network Inference Engine.
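Forecasting purely from past values is usually framed as a supervised problem: each window of past samples is paired with the value a fixed number of steps ahead. The sketch below shows that framing with a naive linear-extrapolation baseline in place of the neural network described above. The function names, the window length, the horizon, and the wind speed values are assumptions for illustration, not data from the project.

```python
def make_windows(series, n_lags, horizon):
    """Pair each window of `n_lags` past samples with the value
    `horizon` steps after the last sample of that window."""
    inputs, targets = [], []
    for end in range(n_lags, len(series) - horizon + 1):
        inputs.append(series[end - n_lags:end])
        targets.append(series[end + horizon - 1])
    return inputs, targets


def extrapolate(window, horizon):
    """Naive baseline: continue the average slope of the window."""
    slope = (window[-1] - window[0]) / (len(window) - 1)
    return window[-1] + slope * horizon


def mean_abs_error(predictions, targets):
    return sum(abs(p - t) for p, t in zip(predictions, targets)) / len(targets)


# Hypothetical wind speeds in m/s, assumed to be sampled once per second.
wind_speed = [8.0, 8.2, 8.4, 8.5, 8.7, 9.0, 9.1, 9.3, 9.6, 9.8, 10.0, 10.1]

X, y = make_windows(wind_speed, n_lags=4, horizon=3)
predictions = [extrapolate(window, horizon=3) for window in X]
mae = mean_abs_error(predictions, y)
print(f"{len(X)} windows, baseline MAE = {mae:.3f} m/s")
```

A learned model would take the place of `extrapolate`; comparing it against a baseline like this on the same windows is one way to judge whether the margin of error is acceptable.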

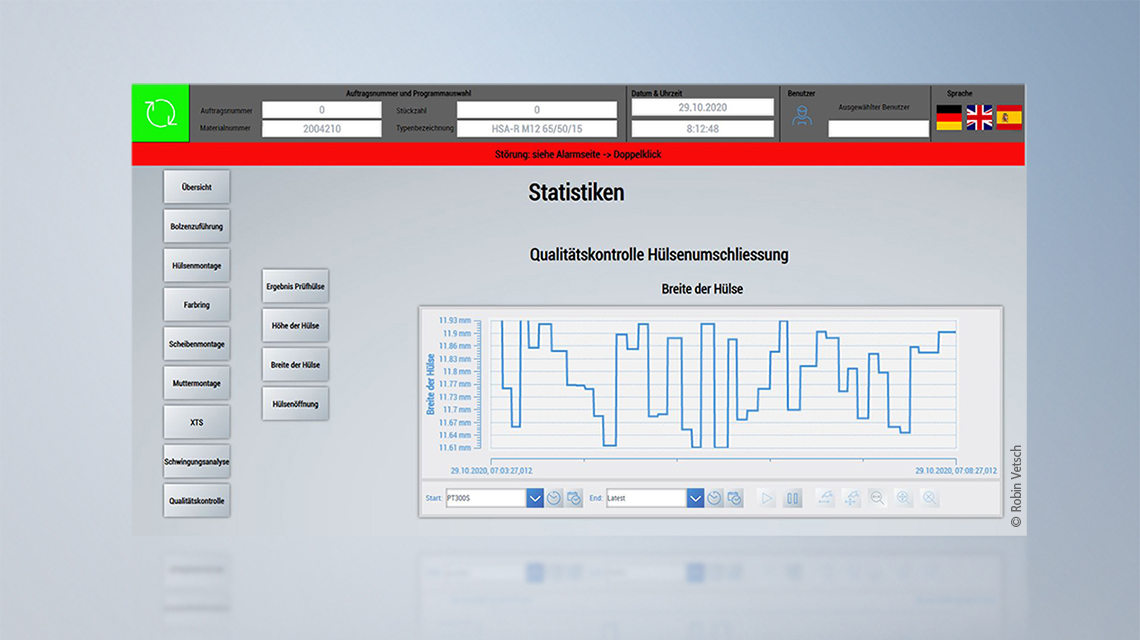

A mechanical bolt anchor essentially comprises the bolt, a washer, a hexagon nut, and a metal sleeve. The frictional forces between the sleeve and the wall of the drill hole ensure sufficient adhesion during use. To apply the normal forces required for the holding force to the drill hole, the sleeve is expanded within the drill hole by the conical head of the metal bolt.

The project, led by R&D engineer Robin Vetsch as part of the OST’s Bachelor of Science in Systems Engineering, focused on the enclosure process, whereby the preformed, punched sleeve encloses the conical neck of the bolt anchor. Only the existing machine data was to be used for quality control – i.e., no installation of additional sensors.

Until this point, the quality of the sleeve around the bolt was mostly checked manually using a test gauge. Now, however, it has been demonstrated that each enclosure can be classified into one of three categories (under-enclosed, acceptable, over-enclosed) within the quality specifications. The geometric key data of the enclosed sleeve (sleeve width, height, and opening) is also to be predicted with a regression model. The 100% inspection of the enclosure process is designed to detect trends or deviations at an early stage.

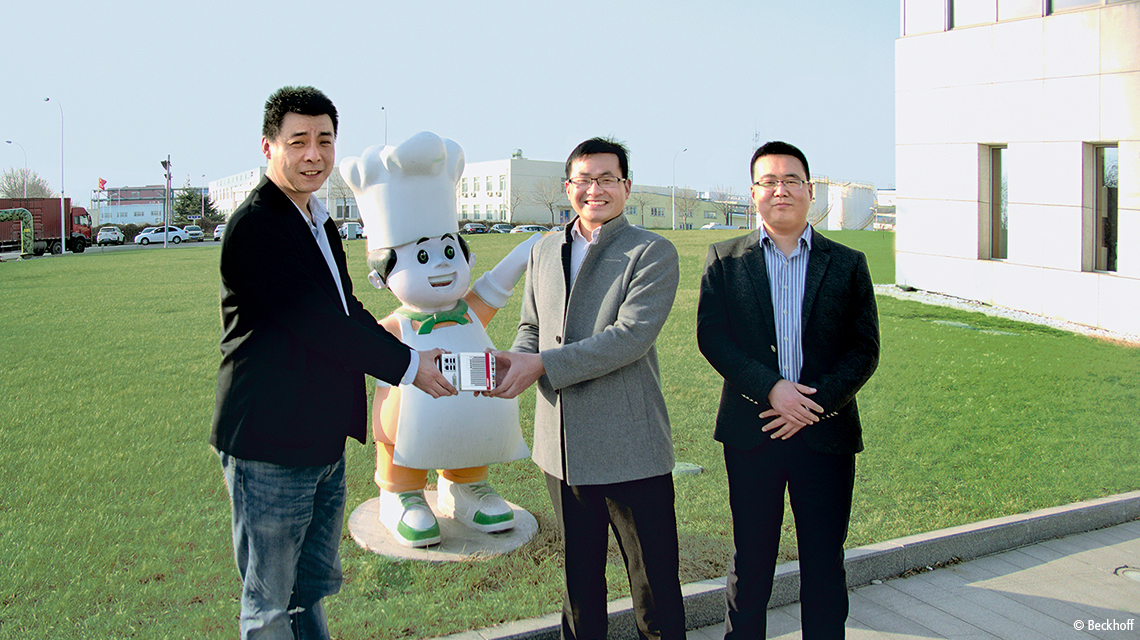

Instant noodles can be found in just about every food store in China. In a bid to reduce the number of products with packaging errors and the associated customer complaints, a large Chinese producer of instant noodles decided to turn to Beckhoff control technology including TwinCAT Machine Learning. This made it possible to perform intelligent and reliable real-time inspection of the packaging quality.

First, the sensor data was acquired via EL1xxx or EL3xxx EtherCAT digital and analog input terminals and TE1300 TwinCAT 3 Scope View Professional. The AI model was then trained with the open-source framework scikit-learn, and the model description file was generated from it. The required pre-processing of the sensor data was implemented in the controller using TF3600 TwinCAT 3 Condition Monitoring. In the next step, the model description file was deployed to a CX51x0 Embedded PC, which runs the AI model in real time with the help of the TF3800 TwinCAT 3 Machine Learning Inference Engine and outputs the inference results for the detection of faulty products via an EL2xxx EtherCAT digital output terminal. The openness of the system, a great advantage of Beckhoff control technology, paid off here in particular: the solution could be integrated into the existing third-party main controller of the production line without excessive effort.
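The sequence described here (acquire sensor windows, pre-process them into features, train a conventional model, run inference on new windows) can be sketched in a few lines. This is a standard-library illustration only: the project itself used scikit-learn for training and TwinCAT functions for pre-processing and inference, none of which appear below. The features (RMS and peak-to-peak), the nearest-centroid classifier, the class names, and the sensor values are all assumptions chosen to keep the example small.

```python
from math import dist, sqrt


def extract_features(window):
    """Pre-processing step: reduce a raw sensor window to two features."""
    rms = sqrt(sum(v * v for v in window) / len(window))
    peak_to_peak = max(window) - min(window)
    return (rms, peak_to_peak)


def fit_centroids(feature_rows, labels):
    """Training step: store the mean feature vector of each class."""
    grouped = {}
    for row, label in zip(feature_rows, labels):
        grouped.setdefault(label, []).append(row)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Inference step: pick the class whose centroid is closest."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical sensor windows, invented for illustration only.
training_windows = [
    [0.10, -0.10, 0.10, -0.10],
    [0.12, -0.08, 0.10, -0.11],
    [0.50, -0.60, 0.55, -0.50],
    [0.60, -0.50, 0.45, -0.55],
]
training_labels = ["good", "good", "faulty", "faulty"]

centroids = fit_centroids(
    [extract_features(w) for w in training_windows], training_labels
)

new_window = [0.52, -0.58, 0.50, -0.49]
print(predict(centroids, extract_features(new_window)))
```

The point of the split is that `extract_features` must behave identically at training time and on the controller; otherwise the deployed model sees inputs it was never trained on.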

Products

TE3850 | TwinCAT 3 Machine Learning Creator

The TwinCAT 3 Machine Learning Creator automatically creates AI models based on data sets. These AI models can be optimized in terms of their accuracy and latency to ensure they run efficiently on Beckhoff Industrial PCs with TwinCAT products. The generated models can also be used as standardized ONNX models beyond the Beckhoff product range. For AI model execution with TwinCAT products, a PLCopen XML file with IEC 61131-3 code is created in addition to the model, which describes the complete AI pipeline and can be imported seamlessly into TwinCAT.

TE3851 | TwinCAT 3 Machine Learning Creator Computer Vision

TE3851 TwinCAT 3 MLC Computer Vision is an extension package for the basic TE3850 TwinCAT 3 Machine Learning Creator web application. This extension allows AI models to be created for image processing, e.g. image classification.

TE3852 | TwinCAT 3 Machine Learning Creator Signals and Time Series

TE3852 TwinCAT 3 MLC Signals and Time Series is an extension package for the basic TE3850 TwinCAT 3 Machine Learning Creator web application. This extension enables creation of AI models for signals and time series, e.g. classification, anomaly detection, and forecasting.

TE3860 | TwinCAT 3 Machine Learning Creator Resource Pack

TE3860 TwinCAT 3 MLC Resource Pack is an extension package for the basic TE3850 TwinCAT 3 Machine Learning Creator web application. If more computing time is required, for example to train further AI models, it can be obtained flexibly via this extension package.

TF3800 | TwinCAT 3 Machine Learning Inference Engine

The TF3800 TwinCAT 3 Function is a high-performance execution module (inference engine) for trained, conventional machine learning algorithms.

TF3810 | TwinCAT 3 Neural Network Inference Engine

The TF3810 TwinCAT 3 Function is a high-performance execution module (inference engine) for trained neural networks.

TF3820 | TwinCAT 3 Machine Learning Server

The TF3820 TwinCAT 3 Machine Learning Server is a high-performance service for executing trained AI models with the option of using hardware accelerators.

TF3830 | TwinCAT 3 Machine Learning Server Client

The TwinCAT 3 Machine Learning Server includes a connection to one local client (the local TwinCAT runtime) as standard. If additional TwinCAT runtimes need remote access to a TwinCAT 3 Machine Learning Server, each of these runtimes must be equipped with a license for the TF3830 TwinCAT 3 Machine Learning Server Client.

TF7800 | TwinCAT 3 Vision Machine Learning

TwinCAT 3 Vision Machine Learning provides an integrated machine learning (ML) solution for vision-specific use cases. Both the training and the execution of the machine learning models take place in real time. With the help of these models, machines can learn sophisticated data analyses automatically, which can replace complex, manually created program constructs.

TF7810 | TwinCAT 3 Vision Neural Network

TwinCAT 3 Vision Neural Network provides an integrated machine learning (ML) solution for vision-specific use cases. The execution of the machine learning models takes place in real time. With the help of these models, complex data analyses can be learned automatically, which means that complex, manually created program constructs can be replaced.

C6043 | Ultra-compact Industrial PC with NVIDIA® GPU

The C6043 Industrial PC with NVIDIA® GPU handles applications with high demands on 3D graphics or deeply integrated Vision and AI program blocks with minimal cycle times. It extends the series of ultra-compact industrial PCs to include a high-performance device with a built-in slot for powerful graphics cards. With the latest Intel® Core™ processors and highly parallelizing NVIDIA® graphics processors, the PC becomes the perfect central control unit for ultra-sophisticated applications. The Beckhoff TwinCAT 3 control software is capable of mapping this as a fully integrated solution – without any additional software or interfaces. With the additional freely assignable PCIe® compact module slot, the C6043 can be flexibly expanded with supplementary functions.

C6675 | Control cabinet Industrial PC

The C6675 Industrial PC is equipped with components of the highest performance class: either the Intel® Processor 300 or Intel® Core™ 3/5/7/9 Series 2 processors are used on an ATX motherboard. The housing and cooling concept, adopted from the C6670, also enables the use of a GPU accelerator card, among other things. A total of 300 watts is available for full-length plug-in cards. Applications in the field of machine learning or vision can thus be realized in an industrial environment.